Anthropic Draws Investor Offers at Over $800 Billion Value | Bloomberg Tech 4/15/2026

THE SO WHAT

Investors waving valuations above $800B at Anthropic, and getting rebuffed, shows that frontier labs now see outside capital as a threat to control, not as the constraint on growth. If you’re an enterprise buyer, assume model access, roadmap, and safety posture will be shaped more by these governance choices than by who writes the biggest check.


MORE FROM THE WIRE

Applied AI

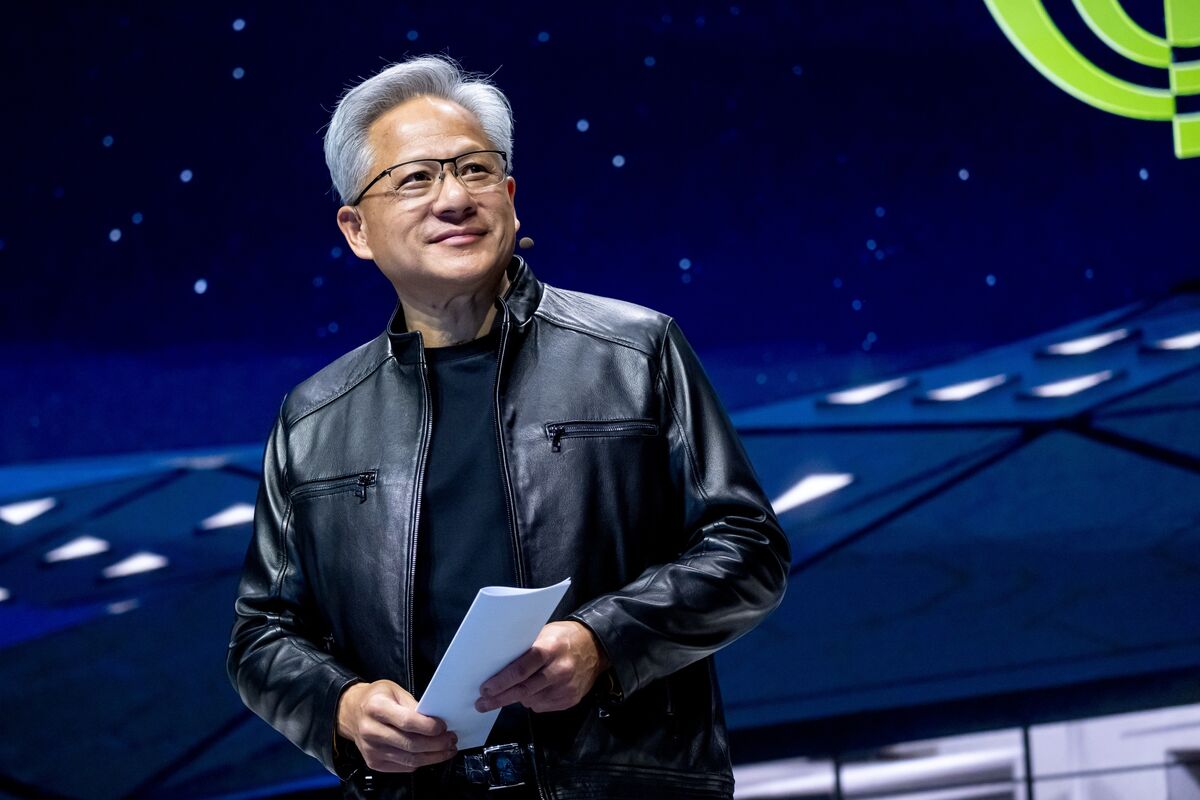

Nvidia’s Huang Says Mythos Shows Need for US-China AI Dialogue

When Jensen Huang uses Anthropic’s Mythos to argue for US–China AI coordination, he’s saying the real systemic risk is regulatory divergence, not just model behavior. If you operate cross-border, assume AI policy will be negotiated at the same level as trade and chips, and design your data, hiring, and partnership footprint so you’re not hostage to a single bloc’s rules.

Applied AI

Sources: Apple plans to send a significant part of its Siri team, known as a laggard, to an AI coding bootcamp; the group is expected to be fewer than 200 (The Information)

Sending fewer than 200 Siri engineers to an AI coding bootcamp two months before a major launch is a tacit admission that legacy assistant stacks are structurally behind LLM-native ones. If your core product depends on an older ML codebase, treat retraining and codebase refactors as urgent operational work, not a side project for “innovation teams.”

Applied AI

Canada’s Champagne to Discuss Anthropic at Meeting With Bessent

When a finance minister plans to raise Anthropic’s Mythos model in a bilateral meeting with the US Treasury Secretary, frontier models have become macro variables, not just tech products. Expect capital, risk, and regulatory discussions around AI to move into finance ministries. If you’re deploying large models at scale, your counterparty is shifting from CIOs to regulators and treasury officials.

Applied AI

Is this the tipping point for AI at work? New Gallup survey finds half of all US employees now use it in some way

If half of US employees already use AI tools in some way, the constraint is no longer access; it’s governance, workflow design, and verification. Stop piloting and start standardizing: pick your stack, define allowed use cases, and instrument quality checks before shadow AI ossifies into your real operating model.