Sam Altman opens up about the Molotov cocktail attack on his home: 'The way Anthropic talks about OpenAI doesn't help'

THE SO WHAT

Personal security risk for AI leadership is now entangled with inter-lab narrative warfare — 'doomerism' and 'fear-based marketing' aren’t just PR frames, they shape who gets targeted in the real world. Boards and comms teams need to treat safety rhetoric as part of physical risk management, not just positioning.


MORE FROM THE WIRE

Applied AI

Unauthorized group has gained access to Anthropic’s exclusive cyber tool Mythos, report claims

Advanced LLMs are now espionage targets in their own right — Mythos leaking into a private Discord channel shows your model endpoints and eval sandboxes are part of your security perimeter. If you're piloting sensitive models, treat access control, logging, and key rotation as production-grade from day one, not after the "real" launch.

Applied AI

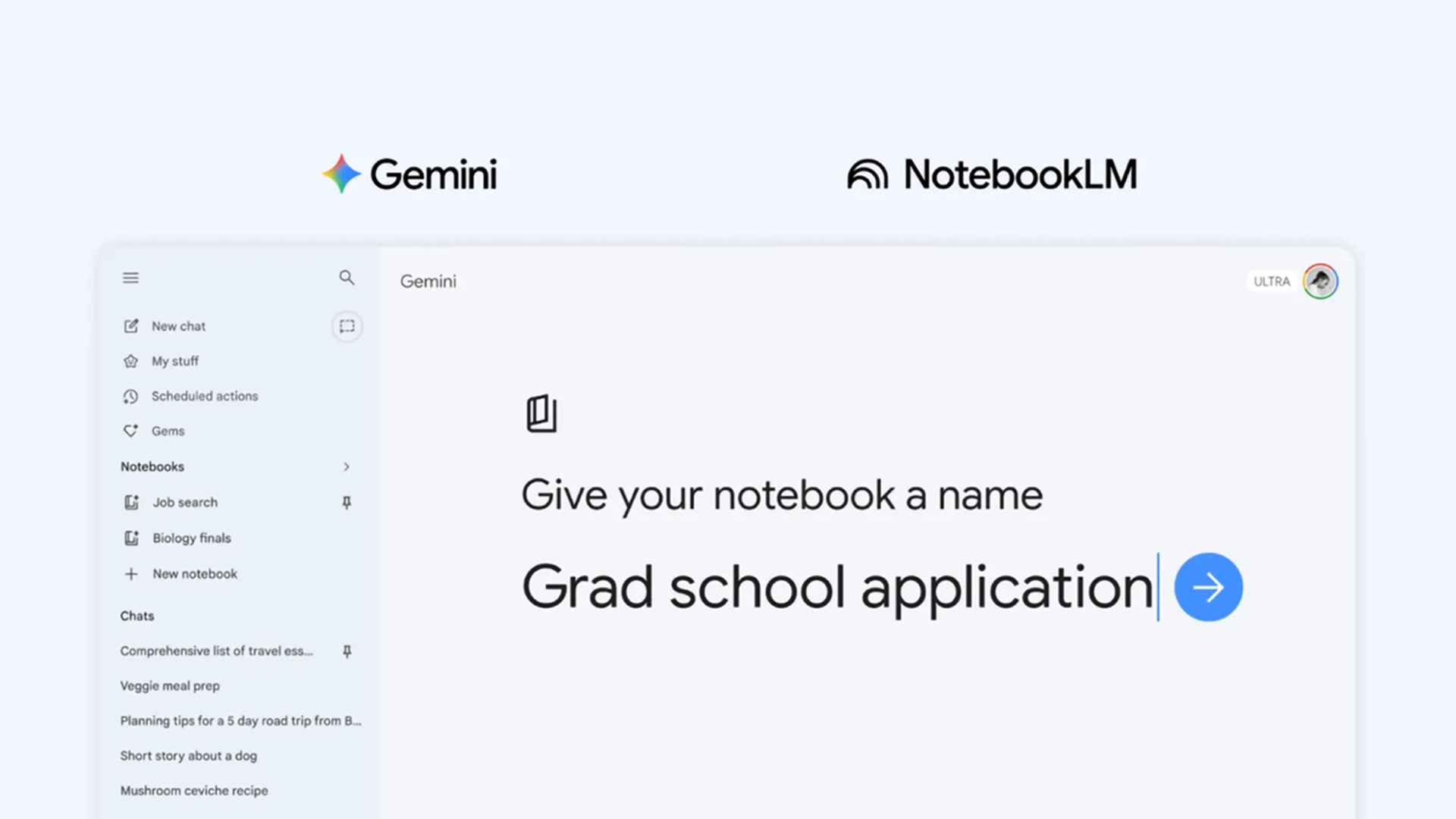

5 tips for using Gemini’s new Notebook feature

Gemini Notebook is another proof point that the default UX for AI is shifting from chat to persistent workspaces. If your product still treats AI as a modal assistant, start designing for long-lived documents, state, and collaboration or you'll lose usage to tools that remember context by default.

Applied AI

Adobe Expands Agentic AI Ecosystem With Big Tech Partners

Adobe is turning its creative and document surface area into an agent routing layer for OpenAI, Anthropic, Google, Nvidia and Amazon — not trying to win the model war, but to own the workflow. If your product lives inside Adobe’s orbit, assume your users will expect agent handoffs and cross-tool automation as a default UX, not a premium feature.

Applied AI

Source: a handful of unauthorized users in a private Discord channel have been accessing Anthropic's Mythos model since the day the company announced it (Rachel Metz/Bloomberg)

A frontier-grade model with offensive cyber capabilities leaking into a private Discord is the nightmare scenario for every lab and enterprise running sensitive models. Treat access control, logging, and key management for high-capability models like you would production payment rails — not like another SaaS login.