Snowflake Seeing Strong Return on AI Investment: CEO

THE SO WHAT

When a CEO says coders are producing work “24 hours a day” via AI agents, that’s a staffing and governance statement—your throughput is now bounded by review, not typing. If you don’t redesign code review, testing, and deployment for agentic output, you’re either leaving leverage on the table or compounding hidden tech debt.

MORE FROM THE WIRE

Applied AI

UK NHS chief champions Palantir’s ‘outstanding results’ in England, pushes for deeper rollout despite growing staff concerns

When a system as politically sensitive as the NHS pushes deeper into Palantir despite staff backlash over access to 1.5M personnel records, it's a clear sign that operational outcomes are trumping reputational risk. If you're selling AI/data platforms into regulated sectors, design for both visible governance and real control: staff trust is now a deployment constraint, not a PR issue.

Applied AI

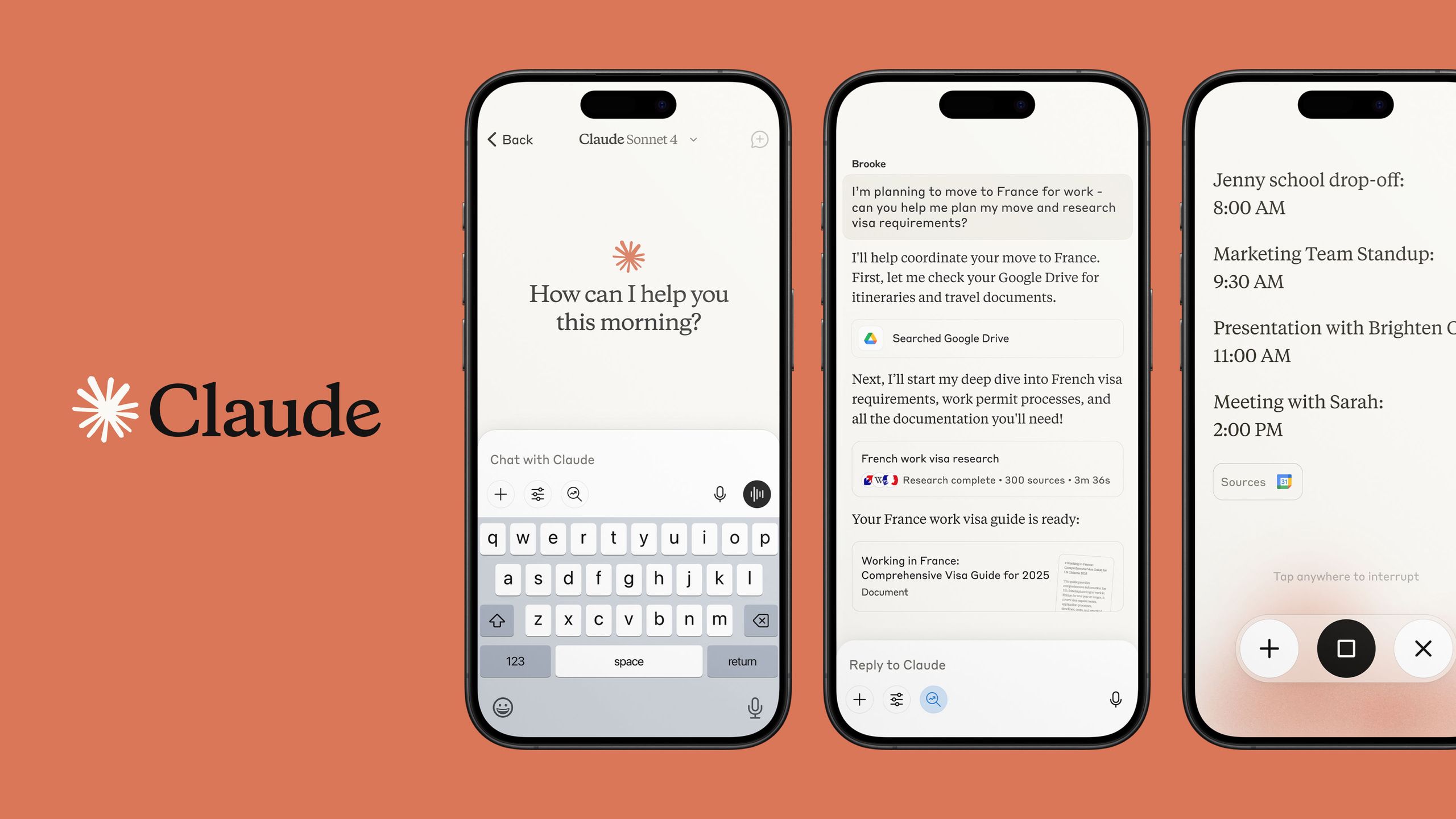

'Go from prototype to launch in days rather than months': Anthropic reveals Claude Managed Agents, promises to make agent building '10x faster'

Agent platforms that promise 10x faster build‑to‑launch are collapsing the moat around “we built an agent” — the differentiation moves to proprietary data, integration depth, and domain workflows. If your roadmap is a thin wrapper on managed agents, assume your advantage has a 6–12 month half‑life and start investing in owned systems and distribution now.

Applied AI

The AI industry’s race for profits is now existential

When analysts talk about an AI monetization cliff, they're really saying the subsidy era is ending — models need unit economics, not just benchmarks. If you're building on underpriced APIs, assume those rates are temporary and design your stack so you can swap providers or bring workloads in‑house without blowing up your P&L.

Applied AI

Google and Intel expand their partnership to deploy Intel's Xeon 6 chips and co-develop custom Infrastructure Processing Units to improve computing efficiency (Zaheer Kachwala/Reuters)

Custom IPUs tied to Xeon 6 mean more AI workload offload from general-purpose CPUs — the real game is carving the data center into specialized lanes for inference, networking, and storage. If you're building infra-adjacent software, assume heterogeneous accelerators and smart NICs are the default target, not a niche.